Where the knowledge goes

A story of burnout and building a knowledge graph that knows my needs

There are 380 files in a folder on my laptop called research-temp8. Or maybe client-context-dump456. I don’t remember what I called this one. I make a new one every day.

A client asks me something I should know. Or something I knew six months ago and can’t remember or find. I open a folder, start dragging things in. Three meeting transcripts. A GSC export from this morning. A few SimilarWeb exports. A SparkToro audience report from a different project that might be relevant. A screenshot from a deck I made in December that I think had a chart about this. Actually a PDF export of the full deck. A paper I read somewhere that said something useful about this exact problem but I can’t remember what exactly.

Then I start a terminal in research-temp8, type claude, and start prompting. Get a partial answer, push harder, the model goes into extended thinking and I start queuing prompts because I’m thinking faster than the model is responding. I watch the model start to drift from the task and from some findings earlier in the session. I get real mad.

Then start a new conversation, paste file paths instead of relying on implied context because the last session got too long, get a marginally better answer, hit compaction, start over. A few hours later I have maybe 70% of what I needed. But I’m not sure I can trust it. The other 30% I figure out by going back through the source files by hand because at some point I stopped trusting I’d fed it the right stuff in the right order.

The client gets the answer. I close my laptop. Headache. I forget about this folder forever.

I’ve done this maybe 300 times in the past year. I’m not exaggerating. I’m also not counting.

This is a story about burnout

Let’s get something out of the way. This is not a story about a workflow or a novel new idea. This is a story about my burnout. And if you run a B2B services business and you’re trying to stay afloat this year you know exactly what I am talking about.

In 2024 the average mid-market client wanted a quarterly strategy deck, a content calendar, a backlinks report, and someone to be on the call when the CMO got nervous.

In 2026 the same client wants all of that plus an opinion on AI search visibility, a thesis on why SEO isn’t dead, a position on which LLM their content is training, a forecast of what their organic traffic will look like as AI Overviews fully normalize, a competitive analysis that includes Perplexity citations and ChatGPT mentions, a content production rate that’s two to three times what it was, a brand-safety review of any AI-generated copy, and a strategy for getting cited in answer engines they didn’t have to think about two years ago.

The budgets for all of this are the same as they were in 2024. In some cases lower, because procurement teams have absorbed the narrative that AI makes everything cheaper and have priced their assumptions accordingly. The budgets did not get the memo that the work tripled.

The way most agencies have responded is to absorb the gap with founder hours. Mine did.

I have spent the last year working myself to a state I would describe as “worrisome to my wife”. I have answered Slack messages at 1am and again at 6am. I have done the prompt-bashing folders-I-forgot thing on Sundays, Mondays, Tuesdays, and all the days. I have made strategy calls work by sheer brute-force preparation, reading every relevant document the night before because I couldn’t find a faster way to get the context into my head. And sometimes made them work through not having the context… that’s what a good team will do for you. I have delivered good work and I have delivered not good work.

99 problems and being a person is all of them

A problem is that the agency knows things, the agency keeps forgetting things, and I keep being a manual index that holds my things together. The problem is that the manual index is a person with a family and a body and a finite number of hours and rest needs and food needs and all the things a person needs.

I knew, somewhere in the back of my head all year, that I was doing the same retrieval task over and over, with mostly the same source material, by hand, in a folder called research-temp. I knew that if I could find an extra week I could probably build something that would do this for me. I never had the extra week. Every week the client work expanded to fill the available time and then expanded a little more.

Jamming triangles into circle-shaped holes

I had been thinking about knowledge graphs for a while in the way you might think about something when you don’t yet know what to do with it - like finding a paperclip on your living room floor. A process engineering brain looks at the work the agency produces and notices, almost involuntarily, that the structure has more variance in shape than the tools we use to manage and build it. I would think about this on a Sunday and put it down. I would think about it again the following Sunday. This started in 2024.

Then I went to BrightonSEO San Diego and caught a talk by Martha van Berkel. Martha runs Schema App and has spent more time thinking about applying knowledge graphs to unstructured data than anyone I know. Her talk made the thing I wanted to do impossible to not think about daily.

So I went home and did something I never have done, which is send a cold email to a stranger I had no business expecting a reply from. I told her I had an idea I thought was worth building and asked if she would be open to collaborating on it. The idea was, in the loosest possible form, the thing that has now become the thing I’ll talk about a bunch below.

Wisdom: People who know what they’re doing are busy being people who know what they’re doing.

She gracefully did not engage with the collab pitch, which in retrospect is the only correct response to a stranger asking you to co-build something that does not yet exist. She did say yes to the other part of the email, where I had asked if she would come speak at MKE DMC, the monthly Milwaukee marketing meetup I run.

Martha’s non-response taught me that the people who know what they’re doing are busy being people who know what they’re doing. They are not available to ride along on a stranger’s hunch, and they shouldn’t be. The person who was going to build this was going to have to be me.

I kept daydreaming about it for a year after. I didn’t get to decide when my idea would become buildable. But the tools caught up past last winter, and after a summer of hard vibecoding in Loveable, I fully immersed myself in Claude code and even hit the first Clawdbot wave.

“El”

The thing I have built nine times is now called El. El is what would have happened if I had built the system instead of the folder, two hundred times ago.

El monitors everything.

Every Sunday evening a pipeline I built pulls live data from Google Search Console, GA4, Ahrefs, and I can’t give away the secret sauce. It computes the week-over-week and year-over-year deltas. Sends to a model to generate a narrative for each notable thing that happened in the past week, including context about the weather, local news, etc. It then sends personalized emails to each account manager. This processing takes about 5-6 hours locally, and there is no human involved. I built the ETL so the LLM reads the numbers but doesn’t get to touch them. Ever.

When a meeting is done, an XML transcript is sent through the pipeline El ingests it. Within a few minutes it’s parsed the file, figured out every piece of structured data it should pull, every decision, every commitment, every open question, every data point someone said out loud that isn’t written anywhere else. Files it into the storage. Done.

And that’s just the ingest.

A part that’s super cool is what happens after El connects the meeting to the performance data from that same week. Connects the commitment someone made on the call to whether anyone logged hours against it, finds the deliverable, and waits to monitor performance. It connects the ranking drop a client mentioned to the Ahrefs data that confirms it and the Google update that maybe caused it. Connects two clients in different industries who have the same indexation problem and don’t know about each other.

Meetings are one of many source types. Industry news, Google updates, blog posts, client briefs, style guides, and internal docs. Performance data doesn’t get extracted at all. You don’t run a row of data through an LLM. Just read it!

All of this feeds a knowledge graph where concepts are nodes, relationships are edges, and patterns surface without anyone asking or even expecting them. And underneath all of it, an autonomous runtime watches for things that should be surfaced. A revenue drop. A missed deadline. A ranking loss that lines up with an algorithm update two of our clients got hit by. El doesn’t wait for me to open a folder and start dragging files in. El is already looking.

What you get when you do what I am explaining here is a thing nobody at any agency has, because nobody has connected all the rooms in the house before. A view of the whole damn picture at once.

The first time I ran El’s pattern layer against a test corpus, it found a cross-client pattern I had deliberately planted. Two different fake clients had an issue causing pages to fall out of Google’s index. Different industries and different team members, different details, but the same problem underneath. The fix for one client was the same fix at the other. In real life, six weeks apart, that’s exactly what would have happened, and we would have solved it twice.

El found the pattern without being told to look for it. It surfaced the connection as the most central concept in the corpus. It named the pattern and grouped both client instances under it as a single thing. It generated a wiki entry with an audit trail showing what it had extracted versus what it had pulled from our APIs and our warehouses versus what it had inferred.

This is what the folders were always trying to be and could never become, because folders don’t notice things.

The value is not in having a knowledge base. The value is in having a system that knows your verbs. Needs are your verbs.

I’m aware that this description sounds like science fiction if you’ve never built something like it. The technology is not new. Knowledge graphs are old. LLM-based extraction has been around for a couple of years. The infrastructure to expose all of it through a single endpoint the team can query from their existing chat tools is a few months old. What’s new is well…vibecoding.

About the name

El stands for Ellie, which is short for Elliott, which is my oldest daughter. The next system in this family will be named for my younger daughter.

I named them this way because the past year has been simply brutal. Every hour I spend prompt-bashing a folder on a Sunday is an hour my daughters do not get. Every late night answering Slack is a morning that I’m not helping them put their socks on “the good way”. The agency has been taking the hours from somewhere, and that somewhere is the people I love. If I’m going to build infrastructure in the evenings instead of doing literally anything else, the infrastructure should be named after the people the infrastructure is supposed to give the hours back to.

There is a less sentimental reason too. Naming infrastructure after the people you love raises the bar on what you’re willing to build and maintain under that name.

El is further along than it was when I started writing this. The ingest pipeline has processed a full year of backfilled meetings. 574 client meetings were parsed, 584 non-meeting files processed across industry news, Google updates, Google patents, blog posts, client briefs, and you get the picture. The pattern finder ran against a test corpus and found the patterns. The extraction profiles are tuned and versioned.

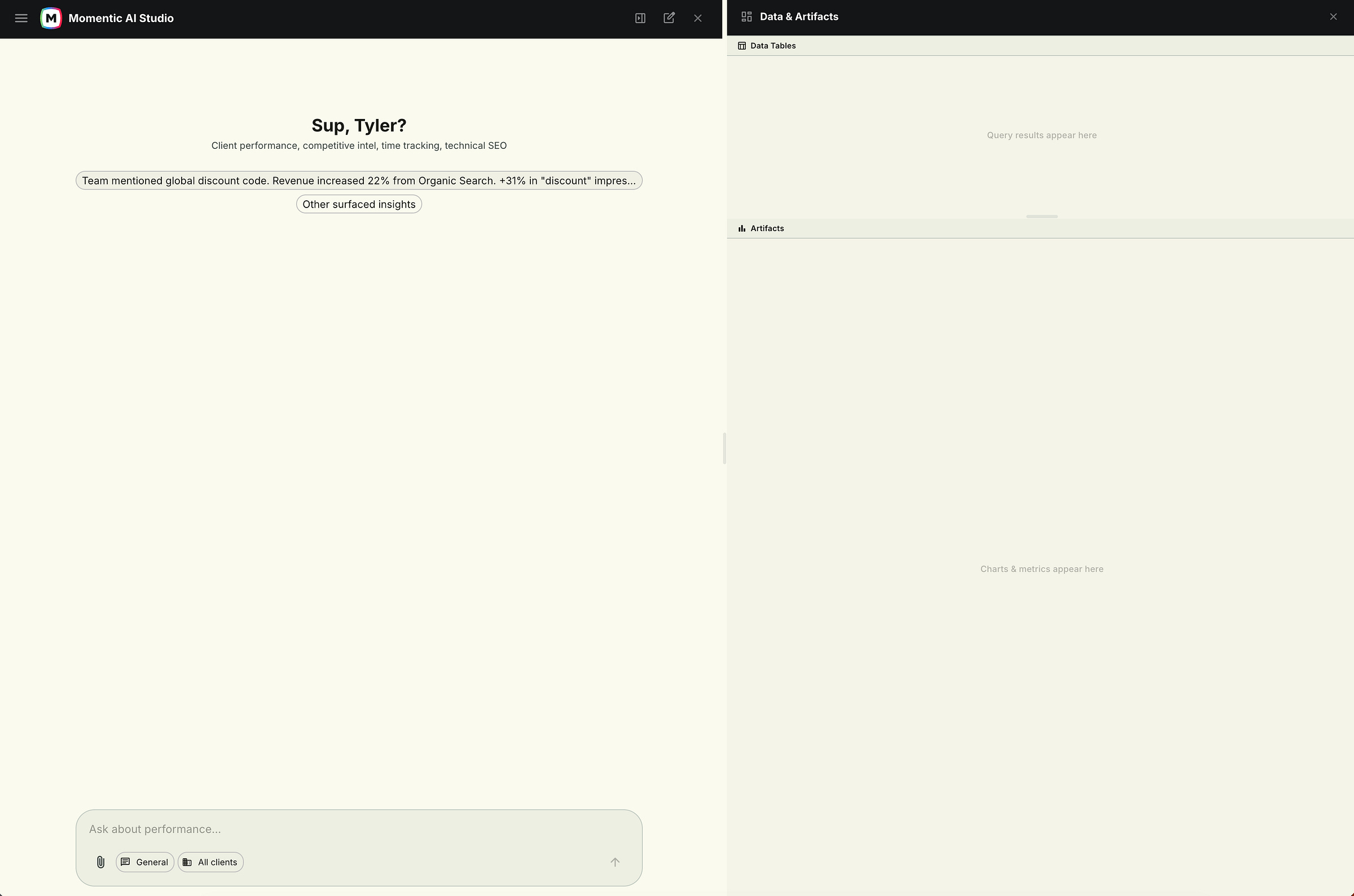

Then I needed a way to see whether the weekly data pipeline was actually producing useful output in the context of clients. So I built a dashboard. Then the dashboard needed a chat interface so I could ask it questions about the data. Then the chat needed tools so it could pull live GSC and GA4 and Ahrefs and all the other data instead of just reading cached numbers. Then the tools needed artifact rendering so the answers weren’t just text walls but actual charts and tables the team could read. Then I needed to trust the output, which meant the LLM could not touch the data pipeline.

So I built it so there’s data piping in through a homebuilt ETL that the LLM can interpret and create additional artifacts from, but cannot alter. The numbers are the numbers. The model reads them, reasons over them, and presents them. It does not get to decide what they are.

That dashboard is now a full internal tool called Momentic AI Studio. It has 30+ data tools, an artifact system that builds charts, graphs, reports, audits, learns from conversation history, a skills builder, row level scoping, and PDF export. I rolled it out to the team this week.

I did not plan to build this. I’m glad I did though.

The services market is asking agencies to do two to three times more work for the same money as 2019, and there is no tool on the market that gives time or money back. El will not change what clients ask for. El will not change what budgets look like in 2026. El will not unbreak my industry. El will initially save me 15-20 hours per week.

And El gives out clients get a better agency, because the version of me that isn’t spending Sundays on research-temp7 is a version that does all things better. The people who hired Momentic hired us for judgment, not for retrieval. Well not in this sense.

The agency stops losing knowledge. Not perfectly. But enough that the next hour I would have spent in the terminal is an hour that goes somewhere better.

Saturdays are for the girls.

And that’s why they’re named in the readme.